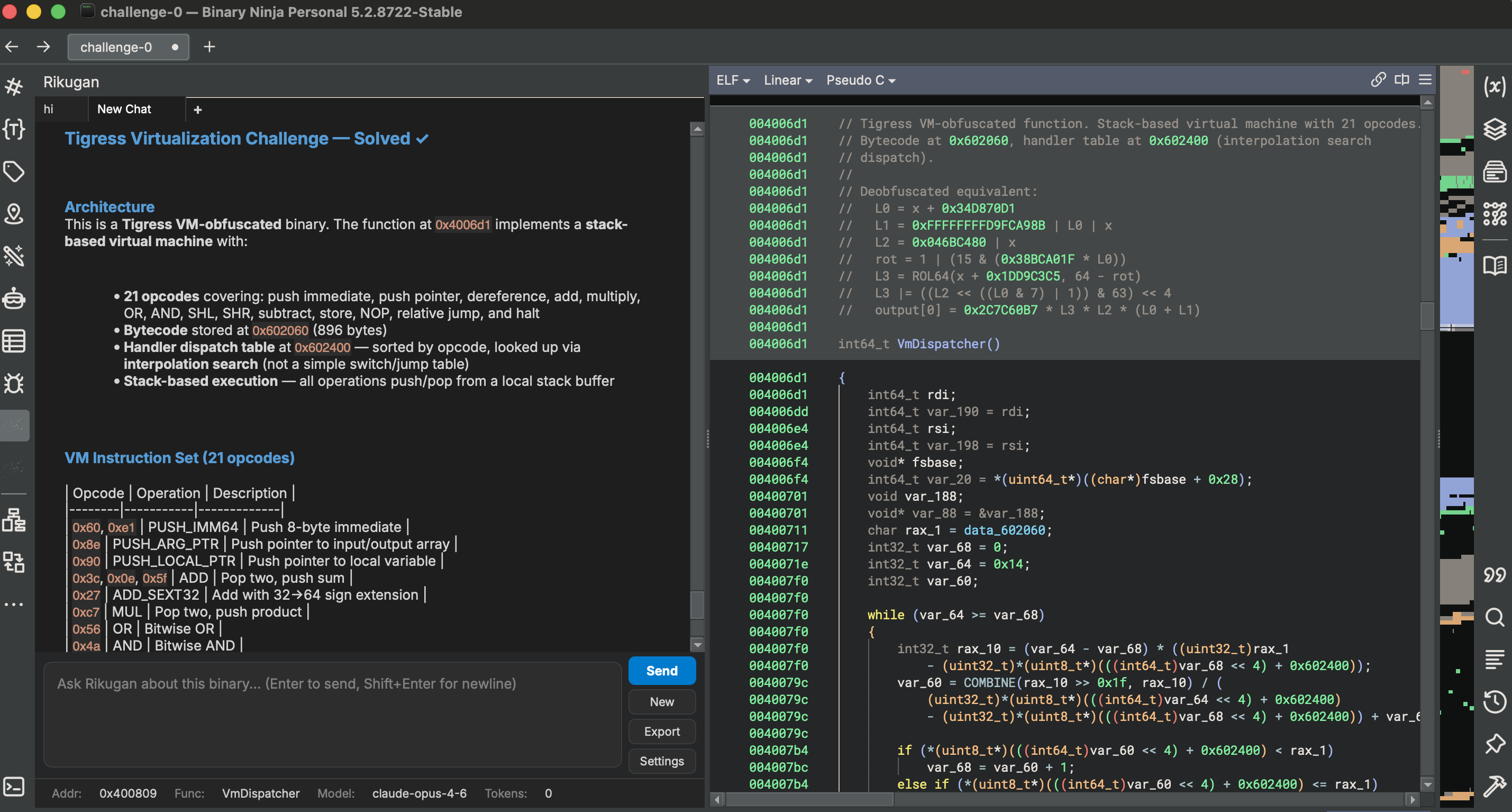

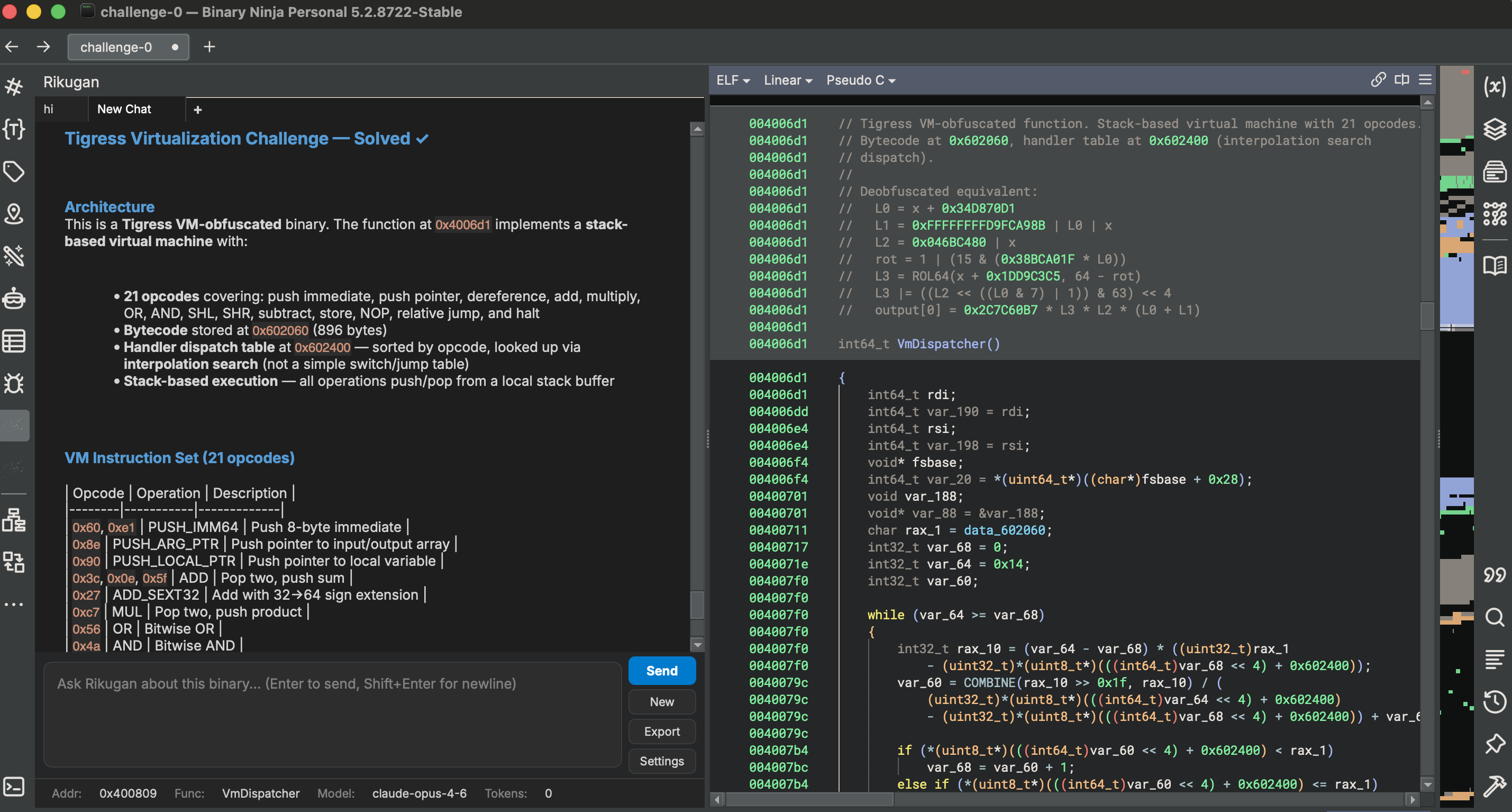

Embedded workflow inside Binary Ninja

Context, chat, and tool access stay attached to the analyst environment instead of shifting to a separate external console.

Advanced reverse engineering assistance directly inside IDA Pro and Binary Ninja. No external consoles and no context switching, just support where you already work.

```shell
curl -fsSL https://raw.githubusercontent.com/buzzer-re/Rikugan/main/install.sh | bash
```


The operating model stays consistent: direct access to host actions, reversible edits, and an agent loop that lives where the work happens.

Rikugan is a full agent with its own agentic loop, context management, role prompt, and in-process tool orchestration. It doesn't talk to your disassembler through a server; it runs inside it.
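To make "in-process tool orchestration" concrete, here is a minimal sketch of an agent loop. It is not Rikugan's code: `fake_model` stands in for an LLM provider, and the tool names and message format are hypothetical. The point is that tools are ordinary Python callables dispatched in the same process, with no server in between.

```python
from typing import Callable

# Hypothetical tool registry. In a real plugin each entry would wrap a
# disassembler API call; here they return canned strings.
TOOLS: dict[str, Callable[..., str]] = {
    "get_function_name": lambda addr: f"sub_{addr:x}",
    "count_xrefs": lambda addr: "3",
}


def fake_model(history: list[dict]) -> dict:
    """Stand-in for an LLM provider: asks for one tool call, then answers."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "get_function_name",
                "args": {"addr": 0x401000}}
    return {"type": "answer", "text": f"Function is {tool_results[-1]['content']}"}


def run_agent(task: str, max_steps: int = 8) -> str:
    history: list[dict] = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = fake_model(history)
        if reply["type"] == "answer":
            return reply["text"]
        # Dispatch the requested tool in the same process; no server round-trip.
        result = TOOLS[reply["name"]](**reply["args"])
        history.append({"role": "tool", "name": reply["name"], "content": result})
    return "step limit reached"


print(run_agent("Name the function at 0x401000"))  # Function is sub_401000
```

The `max_steps` cap is a common safeguard so a confused model cannot loop forever.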

Rikugan brings AI assistance directly into your workflow with native tool integration, structured analysis, and complete operator control.

Navigation, decompiler, disassembly, xrefs, strings, annotations, type engineering, microcode/IL read+write, and scripting — all callable by the agent.

Malware analysis, deobfuscation, vulnerability audit, driver analysis, CTF solving, and more. Create your own custom skills too.

Subagents split investigation across isolated contexts and return a consolidated report instead of mixing partial thoughts into one long thread.
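A rough sketch of the subagent pattern, assuming each subagent keeps a private context and returns only a summary. The area and function names are made up, and `run_subagent` is a hypothetical placeholder for a real model-driven investigation.

```python
from concurrent.futures import ThreadPoolExecutor


def run_subagent(area: str, functions: list[str]) -> dict:
    """Hypothetical subagent. It builds its own private context and hands
    back only a short summary, not its intermediate notes."""
    context = [f"Investigate the {area} code"]  # isolated: never shared with other subagents
    for fn in functions:
        context.append(f"reviewed {fn}")
    return {"area": area, "summary": f"reviewed {len(context) - 1} function(s)"}


def explore(areas: dict[str, list[str]]) -> str:
    # Run one subagent per area in parallel, then merge their summaries
    # into a single report (pool.map keeps the input order).
    with ThreadPoolExecutor() as pool:
        reports = list(pool.map(run_subagent, areas.keys(), areas.values()))
    return "\n".join(f"{r['area']}: {r['summary']}" for r in reports)


report = explore({"network": ["send_beacon", "recv_cmd"], "crypto": ["rc4_init"]})
print(report)
```

Only the merged `report` would go back to the parent conversation, which is what keeps partial reasoning out of the main thread.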

Approval gates and reversible mutations keep the operator in control. execute_python always asks for permission first and shows a syntax-highlighted preview of the script it will run.
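The approval-gate-plus-undo idea can be sketched as follows. This is a hypothetical illustration, not Rikugan's implementation: `Database`, `MutationLog`, and the `approve` callback are invented names, and a real plugin would record undo state through the host disassembler rather than a plain dict.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Database:
    """Toy stand-in for the analysis database: address -> symbol name."""
    names: Dict[int, str] = field(default_factory=dict)


@dataclass
class MutationLog:
    """Records the prior state of every approved edit so it can be undone."""
    undo_stack: List[Tuple[int, Optional[str]]] = field(default_factory=list)

    def rename(self, db: Database, addr: int, new: str,
               approve: Callable[[str], bool]) -> bool:
        # Approval gate: describe the change and do nothing unless approved.
        if not approve(f"rename {addr:#x}: {db.names.get(addr)} -> {new}"):
            return False
        self.undo_stack.append((addr, db.names.get(addr)))
        db.names[addr] = new
        return True

    def undo(self, db: Database) -> None:
        # Restore the most recent edit's previous value (or remove the name).
        addr, old = self.undo_stack.pop()
        if old is None:
            db.names.pop(addr, None)
        else:
            db.names[addr] = old


db = Database({0x401000: "sub_401000"})
log = MutationLog()
log.rename(db, 0x401000, "decrypt_config", approve=lambda msg: True)
print(db.names[0x401000])  # decrypt_config
log.undo(db)
print(db.names[0x401000])  # sub_401000
```

In an interactive tool, `approve` would show the preview to the operator and return their decision.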

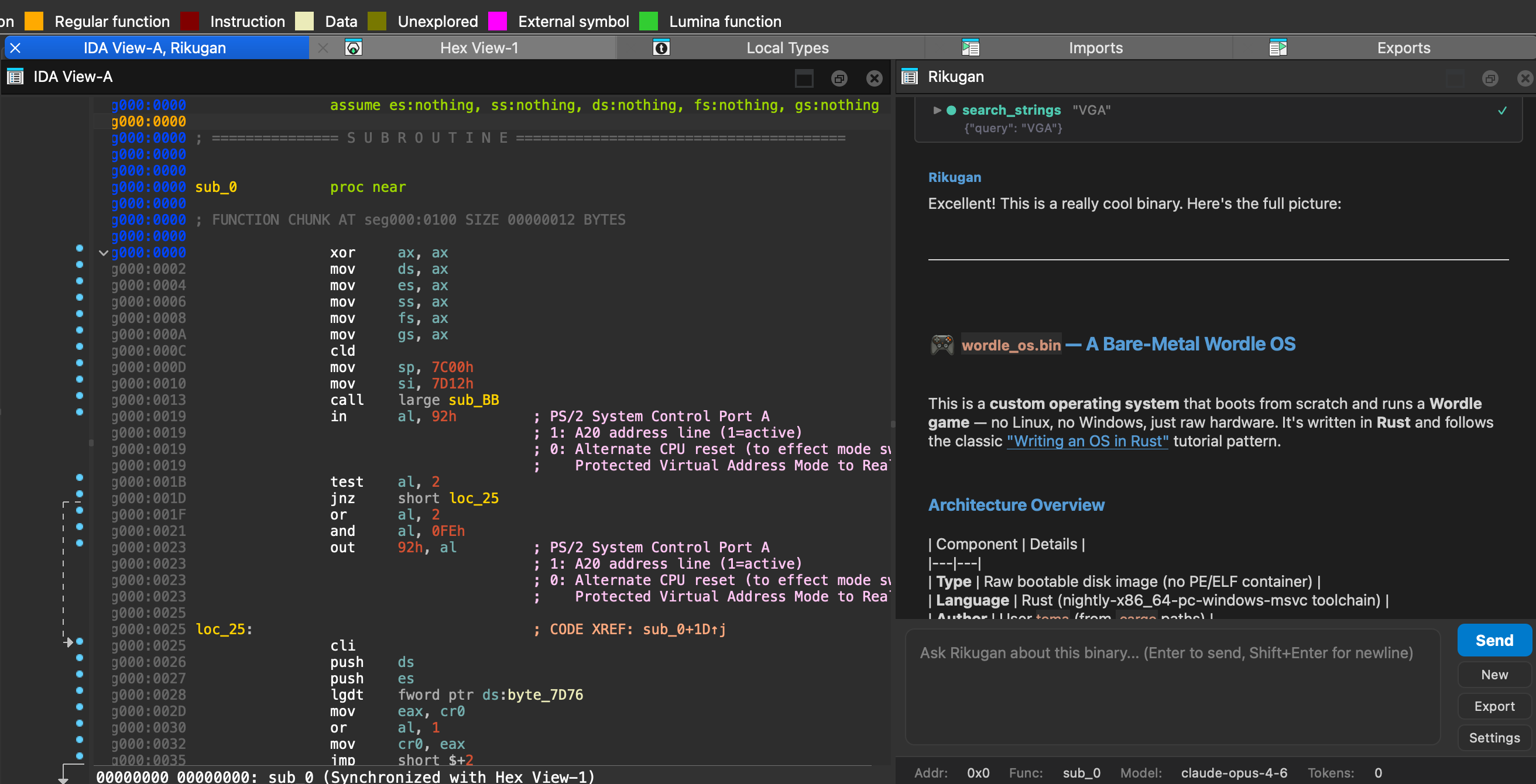

Control what the LLM sees. Deny tools, redact IOCs (IPs, hashes, domains, URLs, wallets), hide binary metadata, and add custom redaction rules, so the sensitive details you choose to hide never leave your machine.
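IOC redaction is essentially pattern substitution applied before text is sent to a provider. The sketch below assumes simple regular expressions and placeholder labels of its own choosing; Rikugan's actual rules are not described here, and these patterns will miss edge cases such as IPv6 or defanged indicators.

```python
import re

# Illustrative rules only. URLs run first so the domain inside a URL is not
# redacted separately; the IPv4 pattern will also hit version strings such as
# 1.2.3.4, and wallet addresses or custom rules would be added the same way.
RULES = [
    ("URL", re.compile(r"\bhttps?://[^\s\"']+")),
    ("SHA256", re.compile(r"\b[0-9a-fA-F]{64}\b")),
    ("MD5", re.compile(r"\b[0-9a-fA-F]{32}\b")),
    ("IPV4", re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")),
    ("DOMAIN", re.compile(r"\b(?:[a-z0-9-]+\.)+(?:com|net|org|io|ru)\b", re.IGNORECASE)),
]


def redact(text: str) -> str:
    """Replace each indicator with a placeholder before text is sent to a provider."""
    for label, pattern in RULES:
        text = pattern.sub(f"<{label}>", text)
    return text


print(redact("beacon to http://evil.example.com/gate.php and 10.0.0.5, drops update.io"))
# beacon to <URL> and <IPV4>, drops <DOMAIN>
```

Rule order matters: running the broad domain pattern before the URL pattern would leave fragments like `http://<DOMAIN>/gate.php` behind.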

Anthropic (Claude), OpenAI, Google Gemini, MiniMax, Ollama for local inference, and any OpenAI-compatible endpoint. Switch anytime.

RIKUGAN.md stores facts per binary. Findings persist across sessions and restarts. The agent remembers what it learned before.
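A minimal sketch of a per-binary memory file, assuming (hypothetically) that facts are stored as markdown bullet lines and deduplicated on write. The real RIKUGAN.md layout may differ, and the example facts are made up.

```python
import tempfile
from pathlib import Path


def load_facts(path: Path) -> list[str]:
    """Read '- fact' bullet lines from a markdown memory file (format assumed)."""
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    return [line[2:].strip() for line in lines if line.startswith("- ")]


def remember(path: Path, fact: str) -> None:
    """Append a fact unless it is already recorded, so restarts don't duplicate it."""
    if fact in load_facts(path):
        return
    with path.open("a", encoding="utf-8") as fh:
        fh.write(f"- {fact}\n")


with tempfile.TemporaryDirectory() as tmp:
    memory = Path(tmp) / "RIKUGAN.md"
    remember(memory, "sub_401000 decrypts the config")
    remember(memory, "sub_401000 decrypts the config")  # ignored: already known
    remember(memory, "C2 host is built at runtime")
    facts = load_facts(memory)  # what a new session would read back

print(facts)
```

Keeping the memory as plain markdown means the operator can read and edit it by hand between sessions.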

Type / to see available skills with autocomplete. Create custom skills in your user directory.
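Slash-command autocomplete can be approximated as case-insensitive prefix matching over skill names. In this sketch, `explore`, `modify`, and `deobfuscation` come from this page, while `my-triage` is a made-up custom skill; how Rikugan discovers skills in the user directory is not shown.

```python
def complete(prefix: str, skills: list[str]) -> list[str]:
    """Return slash-commands whose name starts with what the user typed after '/'."""
    if not prefix.startswith("/"):
        return []
    typed = prefix[1:].lower()
    return sorted(f"/{name}" for name in skills if name.lower().startswith(typed))


skills = ["explore", "modify", "deobfuscation", "my-triage"]  # "my-triage" is hypothetical
print(complete("/", skills))    # every skill, sorted
print(complete("/mo", skills))  # ['/modify']
```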

Read structures, trace references, inspect IL, and build a map of the binary before proposing action.

Turn findings into a concrete sequence of actions with approval points and expected outcomes.

Apply writes at the IL or byte level with the same tooling used to inspect the target in the first place.

Persist only when the operator approves the final state. Reversibility remains part of the workflow.
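The four steps above (inspect, plan, modify, persist) end with an approval on the final state. One way to model that is to stage edits in a working copy and commit only on approval. The `Session` and `Step` classes below are hypothetical and work on raw bytes for simplicity; the example bytes echo the maze idea on this page but are invented.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    action: str
    expected: str  # the outcome the operator should see once the step is applied


@dataclass
class Session:
    """Hypothetical plan/execute/save cycle. Edits land in a working copy and
    only reach the saved image when the operator approves the final state."""
    saved: bytes
    plan: List[Step] = field(default_factory=list)
    working: bytearray = field(init=False)

    def __post_init__(self) -> None:
        self.working = bytearray(self.saved)

    def write(self, offset: int, data: bytes) -> None:
        self.working[offset:offset + len(data)] = data

    def finish(self, approved: bool) -> bytes:
        if approved:
            self.saved = bytes(self.working)      # persist the reviewed state
        else:
            self.working = bytearray(self.saved)  # discard every staged edit
        return self.saved


session = Session(saved=b"\x74\x05")
session.plan.append(Step("NOP the wall-collision branch", "player walks through walls"))
session.write(0, b"\x90\x90")
print(session.finish(approved=False).hex())  # 7405: rejected, nothing persisted
session.write(0, b"\x90\x90")
print(session.finish(approved=True).hex())   # 9090: approved and saved
```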

Type /explore and the agent maps the binary, then spawns isolated subagents to analyze different areas in parallel.

A binary is code, code is text, and LLMs are good at text. /modify does for compiled binaries what agentic coding tools do for source code.

/modify make this maze game easy, let me walk through walls

/deobfuscation reads the IL, identifies the obfuscation technique, and uses IL write primitives to undo it, with your review before every patch.

Read: get_il • get_cfg • track_variable_ssa

Write: il_replace_expr • il_set_condition • il_nop_expr • il_remove_block • patch_branch • write_bytes • install_il_workflow
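To show what a byte-level branch patch like the maze example can mean, here is a sketch that forces a 2-byte x86 short conditional jump (opcodes 0x70 to 0x7F) to always or never branch. It is not how Rikugan's `patch_branch` works; `patch_short_jcc` is a hypothetical helper that handles only short x86 jumps and operates on a plain `bytearray`.

```python
SHORT_JCC = range(0x70, 0x80)  # x86 short conditional jumps (Jcc rel8)
JMP_REL8 = 0xEB                # x86 short unconditional jump
NOP = 0x90


def patch_short_jcc(code: bytearray, offset: int, always_taken: bool) -> bytes:
    """Force a 2-byte x86 Jcc at `offset` to always or never branch.

    Returns the original bytes so the patch can be reverted.
    """
    opcode = code[offset]
    if opcode not in SHORT_JCC:
        raise ValueError(f"byte {opcode:#04x} at {offset:#x} is not a short Jcc")
    original = bytes(code[offset:offset + 2])
    if always_taken:
        code[offset] = JMP_REL8  # keep the rel8 displacement, drop the condition
    else:
        code[offset:offset + 2] = bytes([NOP, NOP])  # always fall through
    return original


# cmp al, 1 ; jz +5  (a made-up collision check)
code = bytearray(b"\x3c\x01\x74\x05")
saved = patch_short_jcc(code, 2, always_taken=False)
print(code.hex(), saved.hex())  # 3c019090 7405
```

Returning the original bytes keeps the patch reversible, in line with the review-before-commit workflow described on this page.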

Profiles let researchers control exactly what the LLM provider sees: redact IOCs, deny tools, and hide metadata so that classified samples and private research stay private.

Deny individual tools (for example execute_python or read_bytes) and hide binary metadata entirely. The agent never sees what you don't want it to see.
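Tool denial and metadata hiding in a profile can be sketched as filters applied before anything reaches the provider. The `Profile` fields and helper functions here are hypothetical, not Rikugan's settings schema.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class Profile:
    """Hypothetical privacy profile; field names are illustrative only."""
    name: str
    denied_tools: FrozenSet[str] = frozenset()
    hide_metadata: bool = False


def visible_tools(all_tools: List[str], profile: Profile) -> List[str]:
    # Denied tools are dropped before the tool list is sent to the provider,
    # so the model cannot even request them.
    return [tool for tool in all_tools if tool not in profile.denied_tools]


def binary_metadata(meta: Dict[str, str], profile: Profile) -> Dict[str, str]:
    # With hide_metadata set, nothing about the file itself goes out.
    return {} if profile.hide_metadata else dict(meta)


strict = Profile("strict", frozenset({"execute_python", "read_bytes"}), hide_metadata=True)
tools = ["get_il", "read_bytes", "execute_python", "write_bytes"]
print(visible_tools(tools, strict))  # ['get_il', 'write_bytes']
print(binary_metadata({"filename": "sample.exe", "arch": "x86_64"}, strict))  # {}
```

Filtering the tool list up front, rather than refusing calls afterwards, means denied tools never appear in the provider request at all.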

Switch providers anytime from the settings panel. Supports prompt caching, retry logic, and streaming.
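This page mentions retry logic without describing it. A common approach is exponential backoff with random jitter. The sketch below assumes that approach and a hypothetical `TransientError`; it is not taken from Rikugan.

```python
import random
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Stand-in for a provider rate-limit (HTTP 429) or 5xx response."""


def with_retry(call: Callable[[], T], attempts: int = 4, base_delay: float = 0.5,
               sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `call` with exponential backoff plus random jitter."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts):
        try:
            return call()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the last error
            # 0.5s, 1s, 2s, ... plus up to base_delay of jitter
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))
    raise AssertionError("unreachable")


calls = {"n": 0}


def flaky() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("429 Too Many Requests")
    return "ok"


delays: List[float] = []
print(with_retry(flaky, sleep=delays.append))  # ok (after two retries)
print(calls["n"], len(delays))                 # 3 2
```

Injecting `sleep` keeps the function testable without real waiting; jitter spreads out retries from clients that failed at the same moment.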

Clone the repo, run the installer, and start reversing with AI.